Machine learning is grappling with objects and texture

Artificial intelligence (AI) has improved rapidly over the last few years, to the point that it is now used to identify shapes and objects, especially in images. This can be very useful to a graphic designer working on a design project. However, AI is easily fooled when it is shown an object with a texture other than its normal one, and these are the quirks and glitches that software developers will have to iron out if designers are to make the most of what is available to them.

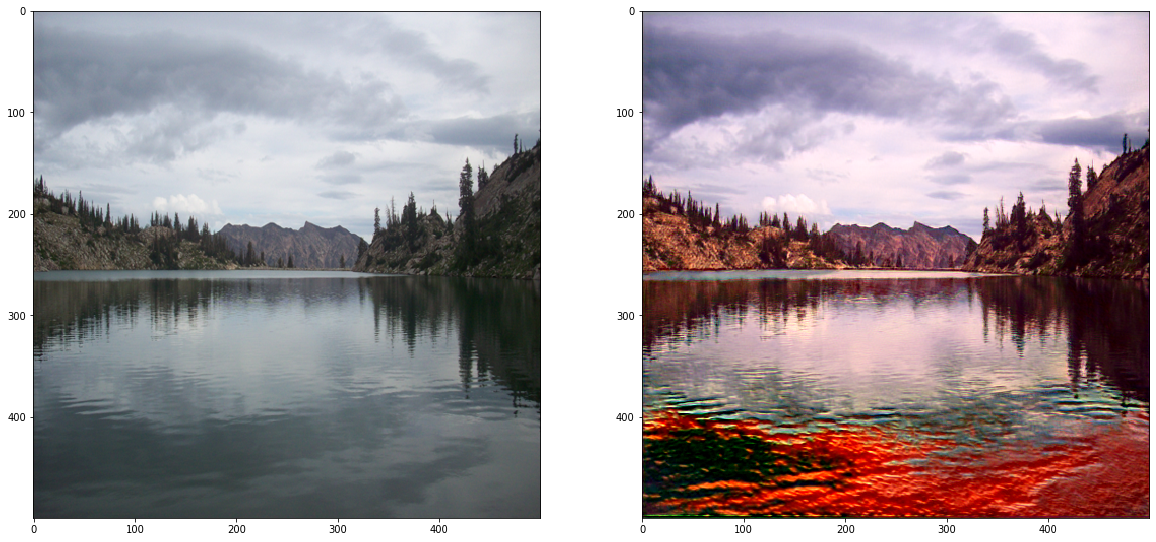

Texture can be the real bogey when it comes to technology recognizing what an object is. Neural networks that have been trained to recognize objects tend to rely on texture rather than shape. That means that if a person removes or distorts the texture of an object, the software comes unstuck.

In recent years, many new convolutional neural network (CNN) architectures for image recognition became available, and by 2017 the majority of them had an accuracy rate of over 95 per cent. Shown a photograph, they could identify with confidence the object or creature in the picture.

These days, it is not hard for developers and businesses to use off-the-shelf models trained on the ImageNet dataset to solve whatever image recognition problems they encounter, whether that means figuring out which kinds of animals are in a photograph or which articles of clothing are in a snap.

On the other hand, CNNs can be easily misled. If a small fraction of the pixels in a photograph is changed, the software may no longer recognize the object correctly. After only a few colors are changed, what was an apple now looks like an elephant to the AI.
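The effect can be illustrated with a deliberately tiny sketch. Everything here is an assumption made for illustration: the classifier is a hand-made linear scorer rather than a real CNN, the eight-value "image" and the weights are hypothetical, and `flip_few_pixels` is a made-up helper. It shows only the general idea that changing the few most influential pixels can flip a prediction.

```python
# Toy illustration only: a hand-made linear "classifier", not a real CNN.

def score(weights, pixels):
    """Linear score: positive means 'apple', otherwise 'elephant'."""
    return sum(w * p for w, p in zip(weights, pixels))


def classify(weights, pixels):
    return "apple" if score(weights, pixels) > 0 else "elephant"


def flip_few_pixels(weights, pixels, k):
    """Change only the k most influential pixels, pushing each one to the
    extreme value (0.0 or 1.0) that lowers the 'apple' score the most."""
    ranked = sorted(range(len(weights)), key=lambda i: abs(weights[i]), reverse=True)
    changed = list(pixels)
    for i in ranked[:k]:
        changed[i] = 0.0 if weights[i] > 0 else 1.0
    return changed


# Hypothetical weights and an 8-"pixel" image with values in [0, 1].
weights = [0.5, -0.4, 0.3, -0.6, 0.2, -0.1, 0.4, -0.3]
image = [0.6, 0.5, 0.6, 0.5, 0.6, 0.5, 0.6, 0.5]

adversarial = flip_few_pixels(weights, image, k=2)

print(classify(weights, image))        # label for the original image
print(classify(weights, adversarial))  # label after changing two pixels
```

In this toy setup only two of the eight values change, yet the label switches. Real attacks on CNNs are more involved, but they exploit the same sensitivity to small, targeted changes.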

And why does this happen? It is possible that artificial intelligence homes in too much on texture, allowing altered patterns in the image to pull the wool over the eyes of the classifier software.

Researchers came up with a series of simple tests to examine how people and machines come to terms with visual abstractions.

The results showed that nearly all the images that kept the objects’ shape and texture were recognized correctly by both the neural networks and the humans. However, when the test involved manipulating or removing the texture of the objects in question, the machines lagged far behind. The software was not able to work with the shape of the objects alone.
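One simple way to picture "taking away the texture" is to reduce an image to a silhouette, so that only the outline survives. The sketch below is an assumption for illustration, not the researchers' actual procedure: `to_silhouette` is a hypothetical helper, and the image is a tiny made-up grid of grayscale values on a white background.

```python
def to_silhouette(image, background=255):
    """Return a copy in which every non-background pixel becomes 0 (black),
    so the object's shape is kept but its surface detail is gone."""
    return [[background if px == background else 0 for px in row] for row in image]


# Made-up 4x5 grayscale image: 255 is white background, other values are texture.
textured = [
    [255, 255, 255, 255, 255],
    [255, 120,  40, 200, 255],
    [255,  60, 180,  90, 255],
    [255, 255, 150, 255, 255],
]

silhouette = to_silhouette(textured)
for row in silhouette:
    print(row)
```

A person looking at the silhouette still sees the same outline, whereas a classifier that leans on surface patterns has lost the cue it relied on.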

It seems that humans can recognize objects just by their general shape, while machines have to take into account finer details, in particular the texture of an object. When asked to identify objects with a false texture, such as a dog with the skin of an elephant, the human participants in the test were correct 95.9 per cent of the time on average, but the neural networks scored only between 17.2 per cent and 42.9 per cent.
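Scores like these can be computed by counting how often a prediction matches the object's shape and how often it matches the pasted-on texture. The following is a minimal sketch under stated assumptions: the function name `cue_conflict_scores` and the five trials are invented for illustration and are not data from the study.

```python
def cue_conflict_scores(trials):
    """trials: list of (shape_label, texture_label, predicted_label) tuples.

    Returns the fraction of predictions that match the true shape and the
    fraction that match the misleading texture."""
    if not trials:
        raise ValueError("at least one trial is required")
    total = len(trials)
    shape_hits = sum(1 for shape, _, pred in trials if pred == shape)
    texture_hits = sum(1 for _, texture, pred in trials if pred == texture)
    return {
        "shape_accuracy": shape_hits / total,
        "texture_rate": texture_hits / total,
    }


# Invented example trials (not results from the study).
trials = [
    ("dog", "elephant", "elephant"),
    ("cat", "clock", "clock"),
    ("car", "bottle", "car"),
    ("bear", "chair", "chair"),
    ("bird", "boat", "truck"),
]

scores = cue_conflict_scores(trials)
print(scores)
```

In this invented sample the classifier follows the texture in three of five trials and the shape in only one, which is the kind of pattern the article describes for the neural networks.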

The problem that machines encounter may lie in the dataset. ImageNet has more than 14 million images of objects divided over many categories, but this is apparently not sufficient in itself: there are not enough angles and other insights. Software trained on this information is not able to comprehend how things are really made, formed and proportioned.

The algorithms in use can tell types of butterfly apart from the patterns on their wings, but if that detail is taken away, the code apparently has no clue what it is looking at. It is hoped that with further research, AI and machine learning will be able to iron out the creases and be more useful to users in the future.